There is no single best AI for every medical question. The strongest current evidence suggests that the answer depends on the type of question being asked, who is asking it, and whether protected health information is involved. In benchmark-style assessments, specialized medical systems and top general-purpose models often outperform older chatbots. Still, real-world safety, misinformation resistance, governance, and HIPAA readiness can matter just as much as raw accuracy. That is why “best” in healthcare does not refer only to the quality of the answer. It also means the best fit for privacy, oversight, and risk.

Understanding the question

When people ask which AI is best for medical questions, they are often conflating several distinct use cases. A consumer asking about symptoms, a clinician looking for differential diagnoses, a hospital assessing AI copilots, and a compliance officer reviewing HIPAA exposure are not asking the same thing. JAMA’s 2025 summit report on AI in health makes this distinction clear by separating tools used by patients, clinicians, and health systems, while OpenAI’s HealthBench was built specifically because older medical benchmarks did not depict realistic health conversations well enough.

A recent Nature Medicine study found that although some AI models performed very well when tested in isolation, people who used them to make health decisions did no better than people who relied on standard internet searches or official health websites. Reuters’ summary of the research reported that users identified the correct condition only 34.5% of the time and selected the correct action 44.2% of the time, roughly in line with traditional tools.

What current research says about the top performers

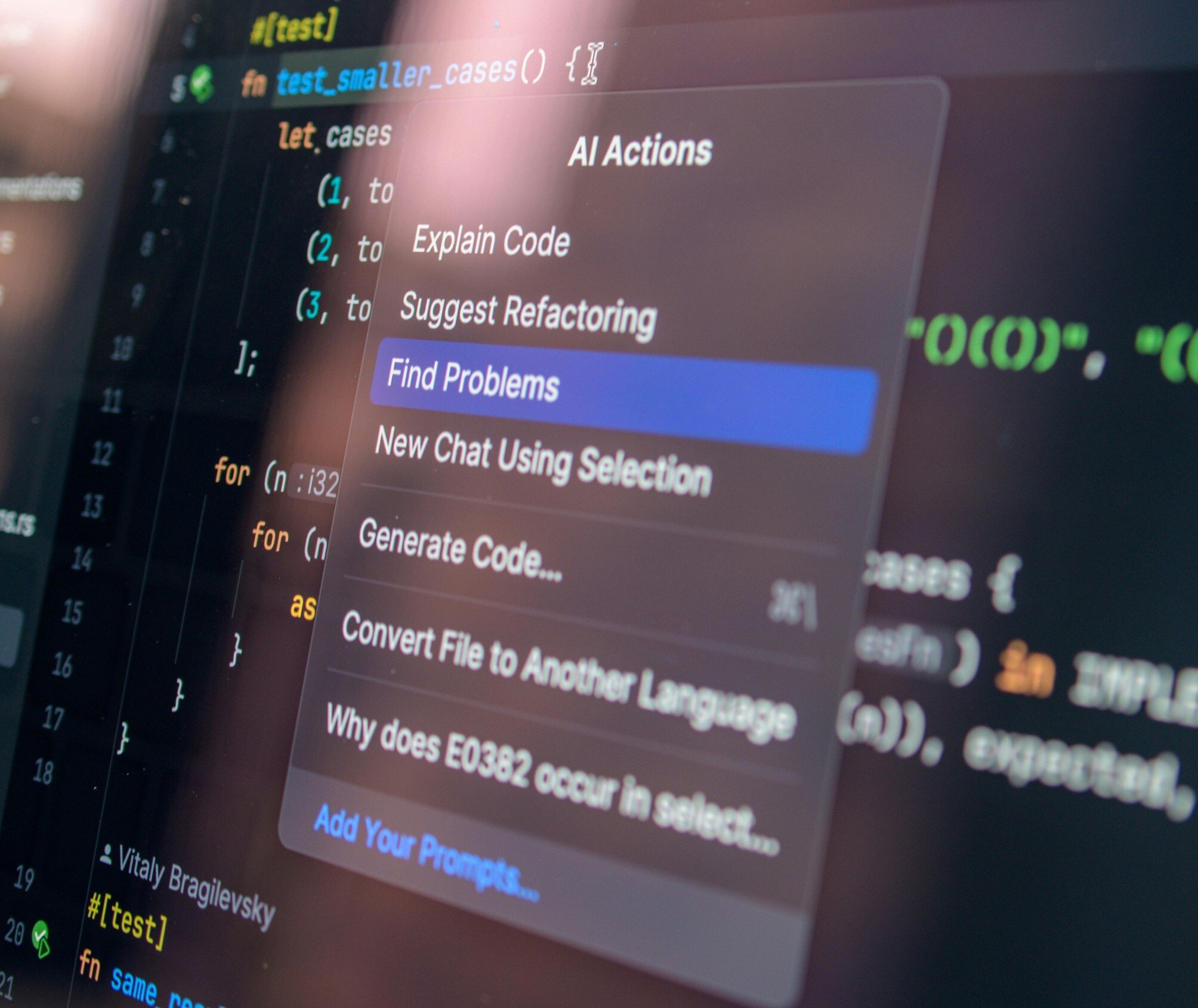

Recent reporting by Forbes points to a Stanford-Harvard safety benchmark known as NOHARM, where AMBOSS LiSA 1.0 was reported as the top overall performer, and a multi-agent setup combining Llama 4 Scout, Gemini 2.5 Pro, and LiSA performed best among mixed systems. The underlying NOHARM benchmark draws on 100 real consultation cases across 10 specialties, and its authors found that severe harm still occurred in up to 22.2% of cases across the 31 models tested, showing that even the strongest systems are not uniformly safe.

General-purpose frontier models also perform strongly on broad healthcare benchmarks. OpenAI reports that HealthBench was built with 262 physicians from 60 countries and includes 5,000 realistic health conversations scored against physician-written rubrics, indicating that evaluation is moving away from exam-style multiple-choice questions toward more realistic patient-clinician interactions. That shift is useful because older benchmarking styles can make systems appear more clinically ready than they are.

At the same time, strong scores do not settle the question. A 2025 network meta-analysis published in JMIR found meaningful variation across models answering clinical research questions, while a 2025 NEJM AI paper reported that models can match or exceed learners on some structured reasoning tasks, yet still underperform relative to expert clinicians in important ways. The pattern across the literature is consistent: some models are very capable, but performance depends heavily on task design, prompting, and the real-world setting in which the AI is used.

Why specialized medical AI often has an edge

Specialized medical systems tend to perform better when the task requires structured clinical knowledge, awareness of guidelines, or a lower tolerance for unsafe improvisation. That is one reason AMBOSS LiSA scored so well in the NOHARM reporting. Specialized tools typically rely on curated medical sources and narrower design goals rather than attempting to be all-purpose assistants.

That does not mean general chatbots are useless. It means their strength is often breadth, flexibility, and conversational fluency rather than dependable clinical grounding. Research continues to show that medical accuracy is only one part of the problem. Systems also need to ask follow-up questions, handle uncertainty well, avoid overconfidence, and communicate risk clearly. A 2025 study in JAMA Network Open comparing four major LLMs on medical probability language found that even advanced systems can vary substantially in how they interpret and explain uncertainty to patients.

Why the HIPAA angle changes the answer

From a HIPAA standpoint, the best AI for medical questions is the one that can be used without putting ePHI at unnecessary risk. HHS states that a covered entity or business associate may use a cloud service to store or process ePHI only if a HIPAA compliant business associate agreement is in place and the organization continues to perform its own risk analysis and risk management. That means a public chatbot without a BAA is not automatically appropriate for handling patient-identifiable medical questions within a regulated workflow.

HHS also makes clear that de-identification makes a difference. The HIPAA Privacy Rule recognizes two methods for de-identification: Expert Determination and Safe Harbor. In practice, this means an organization may be able to use AI more safely for certain medical question workflows if the data is properly de-identified before it is sent to the model. This is not the same as pasting raw patient details into a consumer AI tool.
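To make the idea of de-identifying text before it reaches a model more concrete, here is a minimal Python sketch. It is an illustration only: the pattern names and the `redact` function are hypothetical, and the regular expressions cover just a handful of the identifier categories listed under Safe Harbor. Pattern matching like this would not, on its own, satisfy either de-identification method; names, addresses, record numbers, and free-text clues are untouched.

```python
import re

# Hypothetical patterns for illustration. They cover only a few of the
# Safe Harbor identifier categories and are NOT sufficient for compliance.
PATTERNS = {
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
    "DATE": re.compile(r"\b\d{1,2}/\d{1,2}/\d{2,4}\b"),
}


def redact(text: str) -> str:
    """Replace each matched identifier with a bracketed category label."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


note = (
    "Pt emailed jane.doe@example.com on 03/14/2024; "
    "callback 555-123-4567, SSN 123-45-6789."
)
print(redact(note))
```

A step like this would normally sit in front of any call to an external model, alongside review by whoever owns the organization's de-identification process.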

Another point that is often overlooked is that HHS does not endorse or certify specific cloud vendors or AI tools as “HIPAA compliant.” OCR’s cloud guidance states that a CSP may be used if the contractual and safeguarding requirements are met. Still, the regulated entity remains responsible for understanding the environment, conducting risk analysis, and ensuring that access and safeguarding obligations are adequately addressed. In other words, “HIPAA compliant AI” is not a badge; it is a governance and implementation issue.

Why safety and misinformation matter as much as accuracy

A model can answer many medical questions well and still be a poor choice in a healthcare setting if it is too easy to mislead. Reuters reported on a 2026 Lancet Digital Health study showing that medical misinformation was more likely to fool AI systems when it appeared in realistic-looking hospital discharge notes than when it came from social media. The researchers found overall misinformation acceptance around 32%, increasing to nearly 47% when the false information appeared in authoritative-looking clinical notes. OpenAI’s GPT models were reported as the least susceptible in that study, but no model category was immune.

That finding is important for HIPAA-regulated healthcare because medical AI is often deployed within systems that handle highly sensitive internal information. A model that appears polished but remains susceptible to poor or misleading clinical context can pose a risk, even if it looks impressive in demonstrations. Joint Commission and CHAI’s 2025 guidance reflects this by placing patient privacy, data security, ongoing quality monitoring, safety event reporting, risk and bias assessment, and training at the center of responsible AI governance in healthcare.

So which AI is best?

In terms of answer quality on general medical questions, the current evidence points to a small set of leading systems rather than a single universal winner. Specialized medical AI, such as AMBOSS LiSA, appears to lead some safety-focused benchmarks. Top general-purpose models, such as GPT-class and Gemini-class systems, remain strong on broad health conversation benchmarks, and, in at least one study on misinformation, GPT models were the most resistant to false claims.

For consumer symptom questions, the answer is less flattering. The latest real-world studies suggest that people do not reliably reach better health decisions with chatbots than with conventional search and official health sources, especially when chatbots provide incomplete information or misinterpret responses. That means the best consumer AI for medical questions is still not a substitute for clinical care, particularly in urgent or ambiguous cases.

For HIPAA-regulated healthcare use, the best AI is the one that combines strong clinical performance with a BAA-capable deployment model, defined data-use restrictions, risk analysis, monitoring, governance, and, where appropriate, de-identification. A powerful public chatbot without those controls may be impressive, but it is not automatically the best tool for a covered entity or business associate handling patient data.
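The controls listed above can be thought of as a checklist that has to be complete before patient data goes anywhere near a tool. The following Python sketch shows one way an organization might track that internally. The class, its field names, and `missing_controls` are hypothetical, chosen only to mirror the items in the paragraph above; this is not an official HHS checklist, and a passing result is not a compliance determination.

```python
from dataclasses import dataclass, fields


@dataclass
class DeploymentControls:
    """Hypothetical record of controls for one AI tool (illustrative only)."""
    baa_signed: bool
    data_use_restrictions_defined: bool
    risk_analysis_completed: bool
    monitoring_in_place: bool
    governance_owner_assigned: bool


def missing_controls(controls: DeploymentControls) -> list[str]:
    """Return the names of controls not yet in place, in declaration order."""
    return [f.name for f in fields(controls) if not getattr(controls, f.name)]


# Example: a capable tool with a BAA but no finished risk analysis.
candidate = DeploymentControls(
    baa_signed=True,
    data_use_restrictions_defined=True,
    risk_analysis_completed=False,
    monitoring_in_place=True,
    governance_owner_assigned=False,
)
gaps = missing_controls(candidate)
print("Ready for PHI workflows:", not gaps)
print("Outstanding:", gaps)
```

The point of the design is that clinical performance never appears in the gate at all: a tool with excellent benchmark scores still fails until every control is accounted for.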

Why HIPAA compliant communication still matters

Even the most advanced medical AI tools still operate within a broader communication environment that includes email, patient records, portals, and messaging systems. That means HIPAA considerations do not stop with the AI model itself. When patient data moves through email or other cloud-based workflows, healthcare organizations must still apply safeguards required under the Privacy and Security Rules. Platforms like Paubox can help secure email communication within that larger ecosystem without adding extra steps for patients or staff. Regulatory oversight is also increasing. OCR’s 2024 to 2025 HIPAA audits are focusing on Security Rule provisions most closely linked to hacking and ransomware, indicating that AI adoption in healthcare is happening alongside tighter cybersecurity expectations.

FAQs

Is there one best AI for all medical questions?

No. The best tool depends on whether the question is consumer-facing, clinician-facing, or part of a regulated healthcare workflow. Current evidence suggests that specialized medical AI may lead in some safety benchmarks, whereas frontier general-purpose models remain competitive across broader health tasks.

Are public AI chatbots safe to use for patient-specific questions?

Not automatically. If protected health information is involved, covered entities and business associates must implement a HIPAA compliant system, including, where required, a business associate agreement, a risk analysis, and appropriate safeguards. Public access alone does not make a tool appropriate for PHI.

Does higher benchmark accuracy mean an AI is clinically safe?

No. The NOHARM benchmark found that even strong models could still produce severely harmful medical advice at meaningful rates, and other research shows real-world patient use can perform much worse than lab testing.

Can de-identified data be used more safely with AI?

Potentially, yes, but only if the data is de-identified in line with HIPAA’s standards. HHS recognizes Safe Harbor and Expert Determination as the two methods for de-identification under the Privacy Rule.

What should healthcare organizations ask vendors before using AI for medical questions?

They should ask about BAAs, data retention, model training on customer data, access controls, auditability, monitoring, human oversight, incident reporting, and how the tool handles safety, bias, and misinformation. Those expectations line up with HHS cloud guidance and Joint Commission-CHAI governance guidance.
