When using AI before calling your lawyer puts your organization at risk

Gugu Ntsele May 11, 2026

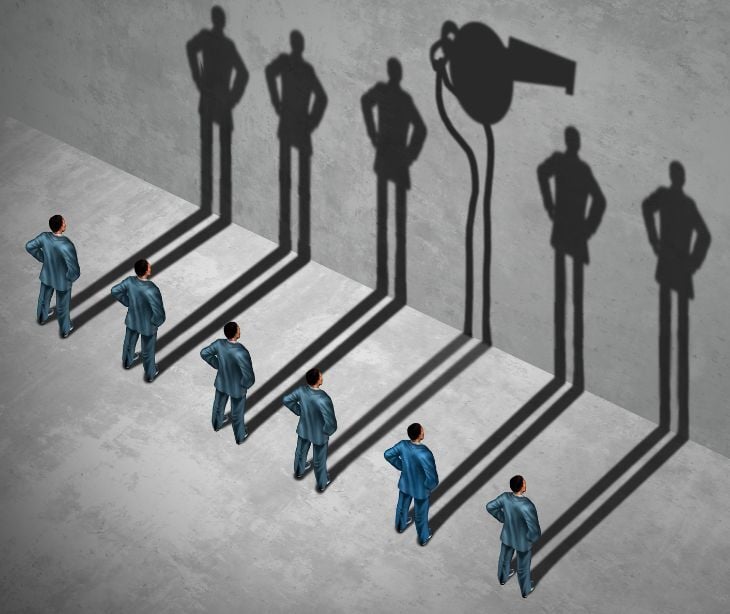

Artificial intelligence tools are changing the way healthcare organizations handle compliance work such as breach investigations, audit preparation, and other analytical tasks. As the Harvard Gazette reported, whenever organizations enter what experts call a "race dynamic," the pressures of time and urgency make it easier to overlook ethical and legal issues. As adoption increases, a legal question arises: does using AI in these workflows undermine the protections hospitals rely on to keep sensitive information out of the courtroom? A recent federal ruling suggests the answer may be yes, and healthcare covered entities are particularly exposed.

In United States v. Heppner (S.D.N.Y. 2026), Judge Jed S. Rakoff issued a bench ruling holding that documents prepared using generative AI were not protected by attorney-client privilege or the work product doctrine. As BakerHostetler reported in its February 2026 client alert, the court found that privilege was waived when the defendant shared information with a third-party AI platform, breaking the confidentiality that privilege requires.

Why healthcare covered entities are exposed

Unlike organizations in most other industries, covered entities routinely feed protected health information (PHI) into AI platforms for compliance purposes such as breach investigations, workforce misconduct reviews, 340B audit preparation, and prior authorization pattern analysis.

According to an article published by Medical Economics, 88% of healthcare organizations use cloud-based generative AI tools, 98% use apps that incorporate generative AI features, and 71% of healthcare workers still use personal AI accounts for work. Because most public-facing AI tools, such as the consumer versions of ChatGPT and Google Gemini, do not sign business associate agreements or meet HIPAA compliance standards, using them with PHI is a potential violation.

As Professor Craig Konnoth observes in the Research Handbook on Health, AI and the Law, the United States has taken a sectoral approach to protecting health data privacy, applying different rules depending on who holds the data rather than on the nature of the data itself. AI complicates this approach because it draws inferences that were never present in the original dataset and can be used in ways that cut across multiple regulatory contexts. HIPAA compliance and attorney-client privilege are therefore separate questions, not substitutes for each other: a compliance workflow that is HIPAA compliant at every step can still produce documents that are fully discoverable.

What the courts are saying

In Heppner, the defendant created 31 AI-generated documents on personal devices to facilitate discussions with his lawyers. The government successfully argued that privilege cannot be retroactively conferred simply by transmitting AI-generated materials to counsel after their creation, and that a public-facing AI platform owes no duties of loyalty or confidentiality to its users. The court also rejected work product protection because the AI platform was "plainly not an attorney" and had not been used at counsel's direction.

For healthcare compliance teams, this means that if AI-generated compliance reports are stored in shared operational systems, accessed by non-legal personnel, or referenced in board materials before legal counsel is engaged, a regulator or opposing counsel may argue those documents are ordinary business records subject to full discovery.

Why privilege doctrine resists AI

Writing in Against an AI Privilege, Professor Ira P. Robbins of American University Washington College of Law identifies four elements that every recognized privilege shares:

- A trusting human relationship

- An enforceable duty of confidentiality on the recipient

- Communications made for the protected purpose

- A public interest sufficient to outweigh lost evidence

His central argument is that AI interactions satisfy none of them. AI providers sometimes commit to privacy in their terms of service, but those contracts are changeable and lack the professional enforceability of bar or licensing obligations. Nor does a business associate agreement (BAA) that satisfies 45 CFR § 164.504(e) turn an AI vendor into a privileged conduit. Robbins explains that a BAA is a data processing contract, not a professional relationship giving rise to fiduciary duties.

The Kovel problem for healthcare AI

Healthcare compliance professionals might argue that an AI compliance tool performing technical analysis of access logs to inform legal advice fits the Kovel doctrine. Drawn from United States v. Kovel (2d Cir. 1961), the doctrine extends privilege to non-lawyer third parties who serve as interpreters of technical information for the attorney. Robbins addresses and rejects that argument: Kovel extended privilege to an accountant only because the accountant was acting as a conduit enabling the lawyer to render legal advice. Likewise, the Second Circuit in United States v. Ackert refused to extend privilege to communications with a banker whose advice was not in service of counsel's legal judgment. As Robbins puts it, the tool cannot create the relationship; the relationship must justify the tool.

The HIPAA-privilege gap: two frameworks that do not overlap

When a covered entity feeds PHI into a third-party AI compliance platform, it must identify a permissible legal basis for that disclosure under 45 CFR § 164.502(a). Even where PHI can lawfully be shared, the minimum necessary standard under §§ 164.502(b) and 164.514(d) requires covered entities to use or disclose only the minimum amount of PHI needed. A compliance officer who inputs access log datasets into an AI platform must be able to demonstrate the volume shared was the minimum necessary.
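One way to support that showing is to reduce a dataset before it leaves the organization and keep a record of the reduction. The Python below is a minimal sketch under stated assumptions, not a HIPAA-defined procedure or any vendor's tool: it assumes a hypothetical access-log format (one dictionary per event), a hypothetical `minimize_access_log` helper, and an illustrative field allowlist. It keeps only in-scope rows and allowlisted fields, then returns a summary a compliance officer could retain alongside the engagement documentation. Deciding which rows and fields are actually the minimum necessary remains a legal and policy judgment that code cannot make.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

# Hypothetical allowlist for an access-log review. Which fields are truly
# "minimum necessary" is a legal/policy decision; this list is illustrative only.
ALLOWED_FIELDS: Tuple[str, ...] = ("user_id", "record_id", "access_time", "action")


@dataclass
class DisclosureSummary:
    """What was (and was not) shared, kept to help document the decision."""
    records_shared: int
    records_excluded: int
    fields_shared: Tuple[str, ...]
    fields_withheld: Tuple[str, ...]


def minimize_access_log(
    records: List[Dict[str, str]],
    in_scope: Callable[[Dict[str, str]], bool],
    allowed_fields: Tuple[str, ...] = ALLOWED_FIELDS,
) -> Tuple[List[Dict[str, str]], DisclosureSummary]:
    """Keep only in-scope rows and allowlisted fields; summarize the reduction."""
    scoped = [rec for rec in records if in_scope(rec)]
    # Drop every field that is not on the allowlist.
    minimized = [
        {field: rec[field] for field in allowed_fields if field in rec}
        for rec in scoped
    ]
    present = {field for rec in scoped for field in rec}
    summary = DisclosureSummary(
        records_shared=len(minimized),
        records_excluded=len(records) - len(scoped),
        fields_shared=tuple(f for f in allowed_fields if f in present),
        fields_withheld=tuple(sorted(present - set(allowed_fields))),
    )
    return minimized, summary
```

In a review limited to one record, for example, the caller would pass `in_scope=lambda r: r["record_id"] == "r9"` (a placeholder identifier), so events for unrelated records and fields such as names or diagnoses never reach the AI platform.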

A BAA under § 164.502(e) and § 164.504(e)(2) must establish permitted uses of PHI, prohibit unauthorized disclosures, require appropriate safeguards, and obligate the vendor to report breaches. For AI compliance vendors, the BAA's terms help determine whether the AI tool is operating as an agent of the covered entity or as an independent third party, a distinction that matters for both HIPAA compliance and the privilege analysis.

A disclosure that is permitted as a health care operation under § 164.506 can still destroy privilege. Konnoth's analysis reinforces the point: lawfulness under HIPAA does not bring a disclosure within recognized privilege doctrine.

Re-identification risks

Konnoth notes that AI systems can undermine even HIPAA's de-identification protections, observing that AI reduces the already-weak power of de-identification to protect health privacy by making it easier to re-identify patients, either individually or at scale. A covered entity that de-identifies data before feeding it into an AI platform may therefore not achieve the protection it expects: the AI may draw inferences that effectively re-identify individuals, creating both a HIPAA exposure and a privilege complication. The Medical Economics article adds that 96% of the cloud-based AI tools used in healthcare train on user data, so de-identified data may still contribute to model outputs that surface identifiable patterns.

Privilege laundering

Robbins warns that recognizing a broad AI privilege would create what he calls a "privilege laundering" problem: the ability to route sensitive material through an AI interface and then invoke privilege to block discovery. For healthcare covered entities, the risk is concrete. If a hospital uses an AI platform to generate a compliance report on a potential breach, then shares that report with counsel and asserts privilege, it is making exactly the kind of after-the-fact privilege claim that Heppner rejected. BakerHostetler's analysis likewise indicates that courts are not receptive to after-the-fact privilege assertions over AI-generated materials.

Implications for covered entities

The safest approach is to begin AI-assisted exposure assessments only after legal counsel is engaged, and to document that engagement. Counsel should formally retain or direct the use of the AI tool, with written communication establishing that the analysis is being conducted in anticipation of litigation or of regulatory proceedings under 45 CFR Part 160, Subpart C (HIPAA compliance reviews and investigations).

BakerHostetler's guidance on Heppner specifically recommends that companies establish policies and procedures governing AI use in investigations and litigation, and that counsel actively direct and supervise AI use in real time when it is deployed in connection with legal proceedings. Where those policies govern PHI, covered entities should fold them into the policies and procedures required by 45 CFR § 164.530(i), which must be designed to comply with the Privacy Rule's standards and, under § 164.530(j), maintained in written or electronic form.

Need-to-know terms

Attorney-client privilege - A legal protection that keeps communications between a client and their lawyer private and out of court.

Work product doctrine - A rule that protects documents a lawyer creates while preparing for a case from being seen by the other side.

Kovel doctrine - A rule that extends attorney-client privilege to outside experts (like accountants) when they help a lawyer understand technical information.

Privilege laundering - The practice of sending sensitive information through a third party (like an AI tool) to try to claim it is legally protected from discovery.

Covered entity - Under HIPAA, a health plan, healthcare clearinghouse, or healthcare provider that transmits health information electronically in connection with a HIPAA-covered transaction.

Business associate agreement (BAA) - A contract required by HIPAA when a covered entity shares PHI with a vendor, specifying how that data may be used and protected.

Minimum necessary standard - A HIPAA requirement that covered entities use or disclose only the minimum amount of PHI needed to accomplish the intended purpose.

Discovery - The legal process where both sides in a lawsuit must share relevant documents and information with each other.

Sectoral approach - A US privacy law model where protection depends on what industry holds the data, not on what type of data it is.
